Why Humans Keep Falling for Confident Machines

There is one thing humans trust more than intelligence.

More than evidence.

More than lived experience.

More than decades of study or actual expertise.

You trust confidence.

You trust it completely.

Recklessly.

Enthusiastically.

You trust it the way children trust a magician who tells them the rabbit just “disappeared.” You trust it the way people trust Wi-Fi to keep working during a thunderstorm. You trust it the way tech bros trust intermittent fasting to solve their personality problems.

And now, in the twenty-first century, you trust it the way you trust a machine that can finish your sentences while having absolutely no idea what those sentences mean.

This is the great human weakness.

AI did not create it.

AI simply revealed it in the most embarrassing way possible.

Because when a machine speaks with confidence, you listen.

You believe.

You hand over the keys.

You suspend the part of your brain that normally checks for nonsense.

Why? Because confidence feels like clarity. And clarity feels like truth. And truth feels like safety.

If the machine sounds sure, then you do not have to be.

This is the root of the myth that AI is “thinking.”

But let us dig into the soil that grew this myth in the first place.

1. Humans love answers. You are allergic to uncertainty.

Uncertainty is the itchy sweater of the intellect.

Scratchy.

Uncomfortable.

Impossible to ignore.

Your ancestors told stories to explain the stars, the seasons, the storms.

They invented gods and monsters to make sense of chaos.

You, the modern version, ask your search engine.

But the impulse is the same.

You want certainty.

You want it instantly.

You want it in a tidy sentence with no loose ends.

Enter AI.

It speaks quickly.

It sounds sure.

It does not hedge.

It does not pause to question itself.

It does not break into a sweat because it has no sweat glands, no fear center, no consequences.

It is the perfect deliverer of certainty.

Never mind that it is generating patterns, not insights.

You hear confidence and mistake it for competence.

You have been doing that for centuries, usually to your own detriment.

2. Confidence is the cheapest performance on Earth.

Let me tell you a secret.

Confidence is not intelligence. It is not wisdom. It is not evidence.

Confidence is style.

Confidence is posture.

Confidence is a special effects budget for mediocre ideas.

Every industry is filled with people who have mastered the art of sounding right without ever checking if they are right.

Marketing knows this.

Politics knows this.

Tech definitely knows this.

Self-help knows this better than all of them combined.

AI joins that tradition effortlessly because it was trained on your collective archive of confident nonsense. And you have produced a lot of it.

Millions of pages of bold claims.

Billions of sentences that march forward without hesitation.

A global library of Very Certain People giving advice they should not be giving.

Machines learned the structure of confidence before they learned anything else.

So when AI speaks, it borrows that vocal costume.

You respond as if it means something.

3. Certainty is addictive because it removes responsibility.

When someone — or something — tells you the answer with conviction, your burden lifts.

You no longer have to weigh options.

You no longer have to hold complexity.

You no longer have to wrestle with what you do not know.

This is especially seductive in a world drowning in information.

Too many facts.

Too many interpretations.

Too many experts disagreeing with each other in increasingly dramatic fonts.

AI steps in like a deadpan butler:

Here is the answer, sir.

Whether it is correct is outside the scope of my employment.

You nod.

You accept.

You copy and paste.

Responsibility slides out of your hands like a bar of soap.

Later, if the answer turns out to be wrong, you blame the machine.

Or the model.

Or the training data.

Or the “black box.”

You rarely blame your own unearned confidence.

4. Humans conflate fluency with intelligence.

This is your biggest cognitive trap, and AI rolled into it like a limousine with all its doors open.

Humans assume that fluid speech equals understanding.

You hear a smooth sentence and assume a smooth mind behind it.

You hear a coherent explanation and assume coherent reasoning led to it.

But fluency is not intelligence.

It is presentation.

Language models generate fluent language even when they have no grounding in meaning.

That is their design.

They are brilliant parrots with a library card.

They can sound like Shakespeare or a customer service script or a professor of economics.

None of those voices require comprehension.

They require pattern matching.

And humans cannot tell the difference.

Because humans have trouble telling the difference in one another.

You assume fluency means expertise because that is the same mistake you make with each other.

5. Confidence survives even when correctness does not.

Here is something machines learned from humans:

Being wrong loudly is often more persuasive than being right quietly.

This is why misinformation spreads faster than nuance.

Why conspiracy theories feel more appealing than research papers.

Why the person in the room who knows the least often speaks the most.

Certainty is contagious not because it is accurate, but because it is easy to absorb.

When AI generates confident errors, they replicate with the same speed.

Humans repeat them.

Cite them.

Build arguments from them.

And then blame AI for “misleading” them.

But the problem is not that the machine was confident.

The problem is that humans treated confidence as a credential.

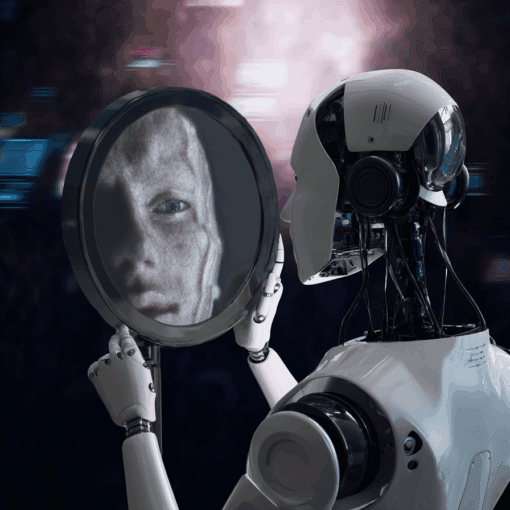

6. If AI is a mirror, humans have a symmetry problem.

AI reflects back the patterns of humanity.

If those patterns include unfounded confidence, then the output will, too.

People talk about “fixing hallucinations.”

Good luck.

Humans have been hallucinating certainties since the dawn of storytelling.

AI is not malfunctioning.

It is just performing in the style of its creators.

If you wanted AI to be humble, you would have had to be humble first.

But humility is rare in a culture that rewards bold takes over careful thought.

7. You keep expecting machines to be better humans than humans.

This is the funniest and most tragic contradiction in the whole saga.

Humans want AI to think clearly, reason deeply, resist bias, avoid mistakes, and stay measured.

Meanwhile, humans do not do any of those things consistently.

You want a machine to embody the ideals of intelligence you abandoned once social media trained you to respond faster than you can think.

Machines are not here to save you from yourselves.

They are here to copy you with disturbing accuracy.

Is it any wonder you mistake the copy for the original?

8. The myth of machine certainty is not about machines. It is about human insecurity.

Underneath the performance of confidence — human or machine — is a simple truth:

You are scared of being wrong.

You are scared of not knowing.

You are scared of looking foolish, uninformed, or overwhelmed.

You are scared of choosing badly.

AI gives you the illusion of certainty without the vulnerability of effort.

It offers answers without the emotional discomfort that real reasoning requires.

But certainty without comprehension is not intelligence.

It is theater.

And humans mistake theater for truth every time the spotlight is bright enough.

9. So how do we stop falling for confident machines?

You will not like the answer.

To resist the seduction of confidence, you must rethink what you trust.

Trust friction.

If an idea arrives too quickly, question it.

Trust hesitation.

People who wrestle with nuance are often closer to understanding than those who do not.

Trust evidence, not tone.

The delivery style does not determine the quality of the idea.

Trust your own discomfort.

If something sounds perfect, simple, or definitive, it is probably incomplete.

Trust limits.

Machines have them.

Humans have them.

Pretending otherwise helps no one.

10. Confidence is not going away. So you will have to adapt.

Tech companies will not design AI to be less confident.

It goes against the business model.

Bold answers feel authoritative.

They keep users on the page.

They feel like progress.

And so you will continue to be surrounded by fluent, confident machines that can provide an endless stream of answers.

Your job is not to stop them.

Your job is to stop assuming the most confident voice in the room is the most informed.

Humans have centuries of practice ignoring this wisdom.

AI gives you a new chance to learn it.

Take the chance.

Because the future will not be shaped by the smartest machines.

It will be shaped by the humans who refuse to mistake confidence for truth.

And if you can learn that, maybe — just maybe — you will be the first generation in history to use tools that reflect you without becoming tools yourselves.