“It’s just a tool.”

This sentence is delivered the way people say “no offense” right before causing some. Calm. Reasonable. Technically correct in the most useless way possible.

Yes, AI is a tool. So was the assembly line. So was the printing press. So was the spreadsheet. Tools shape behavior. They change incentives. They reward some habits and punish others. Calling them neutral does not make that disappear. It just lets the speaker step out of the conversation early.

The neutrality claim is not about accuracy. It is about comfort.

Why Neutral Sounds Responsible

Neutrality feels grown-up. It signals balance. It lets people sound thoughtful without committing to anything messy.

When someone says AI is neutral, what they usually mean is that they do not want to be blamed for what they do with it. Responsibility gets relocated to the tool, the model, the data, the company, or the future version that will surely fix everything.

Neutrality is a rhetorical exit ramp.

It allows us to talk about impact without ownership and power without authorship. It is a neat trick, and it works because it sounds reasonable while dissolving accountability.

Tools Are Not Neutral Because Humans Are Not

Every tool embeds assumptions. About efficiency. About scale. About what counts as success.

AI tools reward speed, volume, and surface-level coherence. That does not make them evil. It makes them aligned with a culture that already prefers output over judgment.

When a tool consistently nudges behavior in a certain direction, pretending it is neutral becomes absurd. The incentives are visible. The patterns repeat. The outcomes converge.

If you use a tool that favors speed, you will produce more. If you use one that favors compression, you will simplify. If you use one that removes friction, you will skip pauses that used to force decisions.

Those changes are not accidental. They are the point.

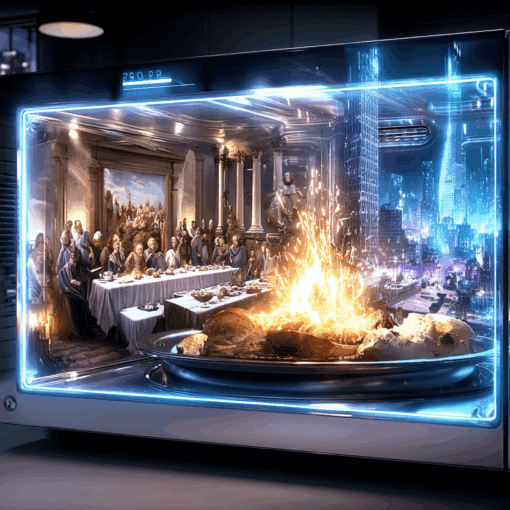

The Assembly Line Fallacy

We have been here before. The assembly line was celebrated as neutral efficiency. It did not care what it produced. It just made production faster.

What it actually did was reshape labor, identity, and expectations. Work became modular. People became interchangeable. Output became the primary metric of value.

None of that required malice. It required scale.

AI follows the same logic, but with cognition instead of muscle. It breaks tasks into fragments, optimizes for throughput, and recombines results in ways that look coherent enough to pass casual inspection.

Calling this neutral is like calling gravity optional.

Neutrality as a Way to Avoid Taste

There is another reason the neutrality myth persists. Taste is uncomfortable.

Taste requires saying no. It requires rejecting outputs that are fine but wrong. It requires standing behind decisions that cannot be justified by metrics alone.

If a tool is neutral, then taste becomes optional. You can publish whatever comes out and blame the system if it falls flat. You can ship faster and call it progress.

The neutrality claim protects people from the embarrassment of discernment.

The Moral Thread We Keep Dropping

Every act of creation carries a moral thread, whether acknowledged or not. Choices imply values. Defaults imply priorities.

When we insist that AI is neutral, we cut that thread on purpose. We treat outcomes as accidents rather than consequences.

Bias is not a glitch. It is the accumulation of decisions. Homogenization is not a bug. It is what happens when optimization favors sameness.

These are not reasons to panic. They are reasons to pay attention.

The Real Question We Refuse to Ask

Instead of asking whether AI is neutral, we should be asking what it rewards.

Does it reward speed or care? Does it reward conformity or risk? Does it reward amplification or reflection?

Tools make the answers to those questions visible over time. Not in theory. In behavior.

If a system consistently pushes work toward a particular shape, pretending that shape is accidental is a choice.

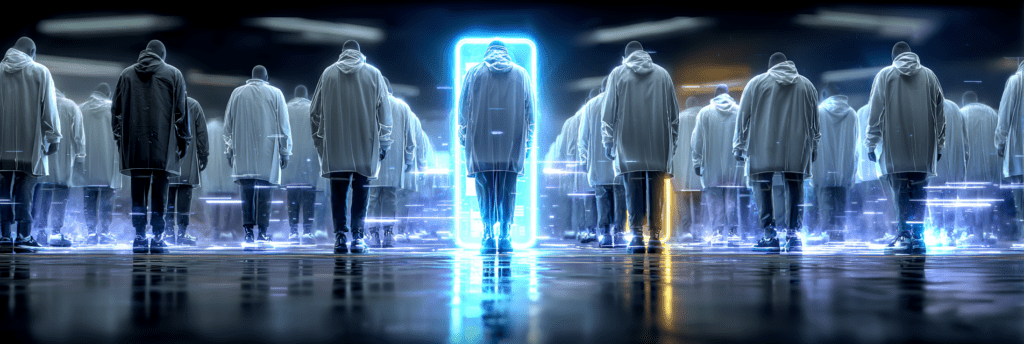

Responsibility Is Not a Feature

People keep waiting for responsibility to be built into the tool. Ethics as a setting. Judgment as a checkbox.

That will not happen, and it should not.

Responsibility lives with the user because responsibility is contextual. It depends on intent, audience, and consequence. No model can supply that without replacing the human entirely.

If that sounds uncomfortable, good. It should.

Neutrality Is a Luxury Belief

Neutrality is easy to claim when the outcomes do not affect you. When your work is insulated. When scale benefits you more than it harms you.

For everyone else, tools are never neutral. They shape who gets heard, who gets hired, and whose work disappears into averages.

Pretending otherwise is not cautious. It is convenient.

What Accountability Actually Looks Like

Accountability does not mean refusing tools. It means owning their effects.

It means acknowledging that faster is not always better. That coherence is not insight. That volume can drown meaning.

It means using AI where it extends thought, not where it replaces it. And knowing the difference.

Stop Hiding Behind the Tool

AI did not introduce the desire to avoid responsibility. It just made avoidance easier.

The neutrality myth survives because it flatters us. It lets us feel modern without being deliberate.

But tools do not absolve. They reveal.

If you want neutral, do nothing.

If you want impact, accept authorship.

The tool will follow.