If your AI output feels bland, generic, or disappointing, the problem probably isn’t the tool. It’s the absence of clear intent, real decisions, and anything solid to work against.

Human Behavior

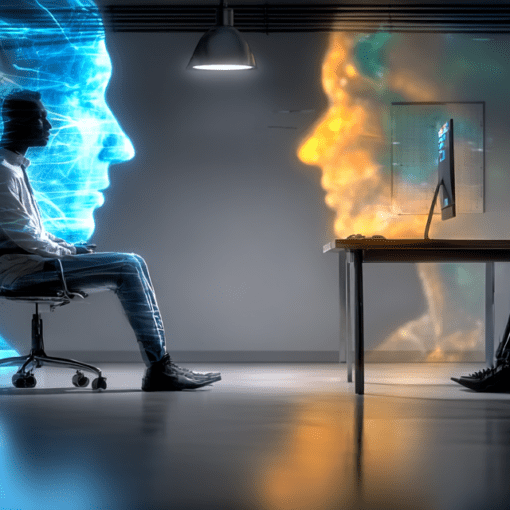

We talk about AI as if it’s an unstoppable force arriving from the future. But inevitability is a story we tell ourselves when we don’t want to examine the choices we’re already making. The real shift isn’t coming. It’s happening quietly, one decision at a time.

People say they’re afraid of AI becoming too intelligent. But what really unsettles them is how familiar its thinking looks. Pattern-matching, repetition, borrowed confidence. The machine didn’t invent that behavior. It mirrored it.

Calling AI a neutral tool sounds responsible, but it is mostly a way to step out of the conversation. Tools shape behavior, reward certain choices, and quietly dissolve accountability when no one claims authorship. Neutrality is not caution. It is convenience.

AI didn’t make our work shallow. It just exposed how often thinking had already been replaced by speed, repetition, and the illusion of productivity. When judgment disappears from the process, the output can look impressive and still mean nothing at all.

Everyone is thinking. Everyone is reacting. Everyone is very busy having opinions.

Strangely, very little of this activity results in understanding.