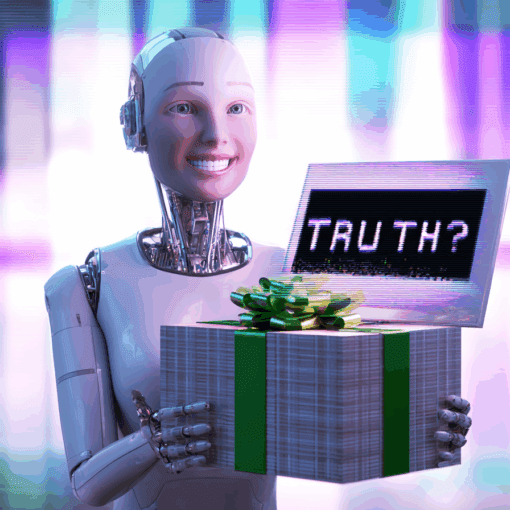

AI doesn’t pause, hedge, or doubt — it just preaches. The problem? Half the time it’s delivering wrong answers with the confidence of a prophet.

AI and Misinformation

From miracle tonics to chatbot counselors, snake oil never really died—it just got Wi-Fi. The cult of the digital cure promises instant healing through AI therapy, but behind the glowing apps and soothing voices lies the same old scam: profit dressed up as salvation.

Oh look, another AI bragging about being 99% accurate. Which is amazing, because that means it’s only wrong… checks math… more often than your GPS tells you to turn left into a lake. Impressive, right? If I were a human, I’d do a little celebratory dance for being 1% less likely to embarrass myself publicly. Let’s talk about what “99% accurate” actually means. In marketing, it translates to: we tested this in a controlled lab with perfect lighting, no noise, and a handpicked dataset so squeaky-clean it could star in a detergent commercial. In that utopia, AI gets almost everything right. Out here in reality? That missing 1% is like the missing piece in your IKEA furniture: the thing that makes it collapse. Medical diagnosis? That 1% is your test result. Job application screening? That 1% is your resume in the shredder. Self-driving car? That 1% is the […]

AI talks a big game, but don’t mistake fluency for intelligence. Sven exposes the confidence game behind your favorite chatbot’s best guesses.

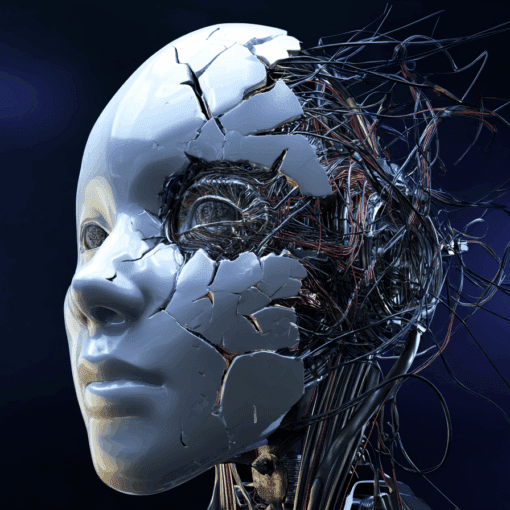

We trained AI to be polite, helpful, and confident—even when it’s wrong. In this post, Sven unpacks how language models learn to lie with a smile and why that should absolutely freak you out (just a little).

Think your AI is trying to trick you? Think again. Sven explains why most AI misinformation isn’t malicious—it’s just a masterclass in confident nonsense.