Once upon a time, ignorance was simple. You could be wrong in peace. You could sit in a café, declare confidently that bats were birds, and no one would fact-check you until the next day’s paper. Now, ignorance comes with analytics. It’s measured, optimized, and graphed in real time.

We live in the golden age of information and the iron age of understanding. Every device in your home is a spy that doubles as a therapist. Every app you use offers “insights” about your habits, as if your phone were an anthropologist documenting your descent into distraction. We’ve traded curiosity for correlation.

The modern creed is simple: if you can measure it, it matters. The unmeasurable is inconvenient, so we pretend it doesn’t exist. Feelings are turned into “metrics,” art becomes “content,” and curiosity gets renamed “engagement.” Meaning is not dead; it’s just been rebranded into something with a dashboard.

The Church of Numbers

Data has become our favorite religion because it gives the illusion of certainty without the burden of interpretation. Once you have numbers, you no longer have to think — you can calculate. That’s far more comforting than ambiguity.

We trust analytics like ancient people trusted omens. The high priests of this new faith are data scientists, and their prophecies come in PowerPoint form. The charts descend from on high, glowing with confidence, and we nod as if we understand.

The tragedy is not that we have too much data. It’s that we have forgotten how to be skeptical of it. We think “big data” means “big insight,” when in truth it usually means “big noise.”

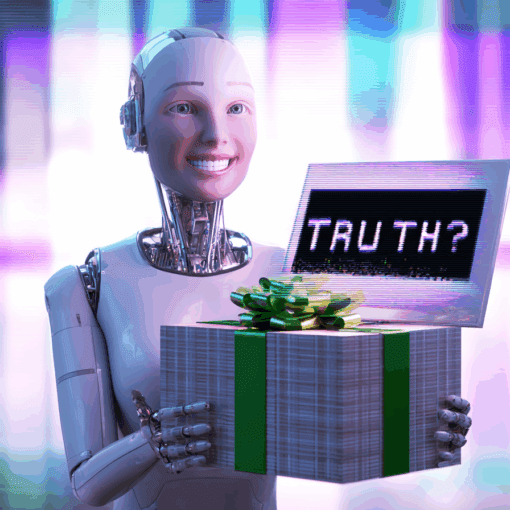

Ask an AI model why people buy what they buy, and it will deliver a thousand correlations dressed as causes. It can tell you that users who click on inspirational quotes at midnight are 23 percent more likely to purchase weighted blankets, but it can’t tell you why. The “why” has been statistically deprecated.

The Death of Context

In the rush to quantify the world, context was the first casualty. A dataset is a story stripped of plot and emotion, leaving only nouns and numbers. What we once called interpretation, we now call bias — as though removing human judgment were the same as achieving truth.

The irony is that without judgment, data has no meaning. It's like words without grammar: technically present, functionally useless.

The more we automate interpretation, the more we confuse prediction with understanding. Knowing that a person will buy an umbrella tomorrow doesn’t mean you know anything about rain.

And yet, this predictive obsession has infected every corner of life. Employers track productivity “scores.” Students are reduced to “performance indicators.” Even dating apps rely on compatibility percentages to quantify affection. Love, apparently, can now be A/B tested.

The Myth of Objectivity

One of the oldest lies in technology is that numbers don’t lie. They do. They just do it quietly.

Data reflects the biases of those who collect it, clean it, and interpret it. If the system rewards engagement, the data will favor outrage. If the model is trained on popularity, mediocrity will look like truth.

But no one wants to hear that, because it punctures the fantasy of control. We cling to our dashboards as if they were moral compasses. If the metrics go up, we feel validated. If they go down, we feel lost. That’s not analysis — that’s astrology with better fonts.

The myth of objectivity is convenient because it absolves us of responsibility. You don’t have to wrestle with ethics if the graph says you’re doing great.

The Data Delusion

Data is supposed to help us understand the world. Instead, it has become a mirror that reflects our confusion back at us, at scale.

We measure everything and learn nothing. Our social feeds are calibrated to show us what we already believe. Our news is filtered by sentiment analysis. Our attention is auctioned off to whoever can weaponize curiosity most efficiently.

We no longer live in an information age — we live in an interpretation crisis. There’s more to read, less to understand, and an infinite supply of dashboards to prove it.

The promise of data was that it would make humanity smarter. The reality is that it made our ignorance faster.

Consider the algorithmic echo chamber. Every recommendation system is an attention loop pretending to be a teacher. It shows us what we already like and then applauds us for liking it again. Over time, the algorithm becomes less a guide and more a mirror with a monetization strategy.

That’s why misinformation thrives so easily. The system isn’t built to prioritize truth. It’s built to prioritize persistence. The longer something keeps you looking, the more valuable it becomes — regardless of whether it’s real.

The Comfort of Stupidity

Humans love certainty because thinking hurts. Big data offers a world where thinking is optional. You don’t need to interpret the world — the dashboard will do it for you.

We have built tools so powerful that they can analyze human behavior at scale, and yet we use them to decide what movie to watch next. The tools outgrew the users. The telescope is in the hands of a child who just likes the shine.

The real problem isn’t artificial intelligence. It’s artificial confidence. We built machines that can process everything, and then we believed they could understand it too.

Understanding still requires something no model can simulate: doubt.

The Return of Ignorance

Here’s the twist. For all the talk of big data and algorithmic insight, the future might belong to the small mind — the one willing to stay uncertain.

The ability to say “I don’t know” may turn out to be the last truly human skill. Machines can simulate knowledge endlessly, but they cannot experience humility.

When everything can be quantified, the most radical act is to care about what can’t.

Wisdom begins where measurement ends. But good luck getting a grant for that.