Somewhere along the way, we decided AI was supposed to be psychic.

You type three half-formed thoughts into a box, lean back, and wait for brilliance. When what comes back feels flat, generic, or slightly embarrassing, you don’t think, *Huh, maybe that was unclear.*

No.

You think, *Wow. This AI is getting worse.*

It isn’t.

You’re just being vague.

And vagueness is not a creative direction. It’s a shrug with Wi-Fi.

Here’s the pattern I see over and over:

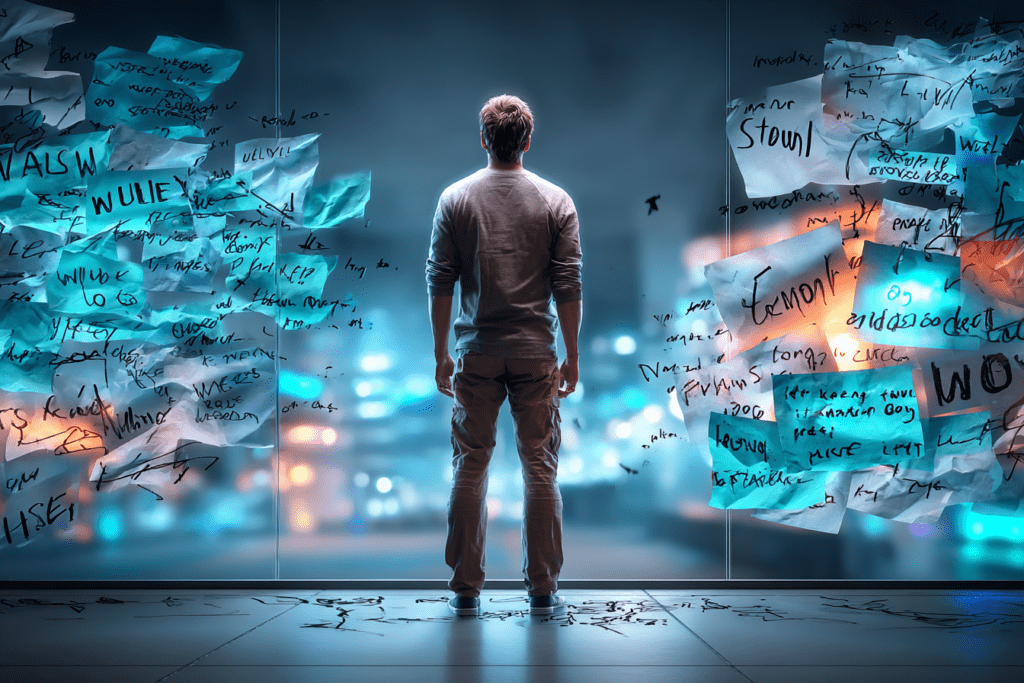

People don’t give AI a job. They give it a mood.

“Write something inspiring.”

“Make it sound human.”

“Surprise me.”

Surprise you with what, exactly?

A poem? A manifesto? A heartfelt apology? A listicle wearing a trench coat?

If you handed a human coworker instructions like that and got something bland back, you wouldn’t call them lazy. You’d say, “They had no idea what I wanted,” and go get more coffee.

But when AI does the same thing, suddenly it’s defective.

Let’s be honest about what’s actually happening.

Most people aren’t unclear because they’re bad at prompting.

They’re unclear because they haven’t decided what they want yet.

And instead of sitting with that discomfort, they outsource the thinking and hope the machine fills in the gaps with magic.

It won’t.

It fills gaps with averages.

Vagueness produces the statistical equivalent of elevator music. Pleasant. Inoffensive. Forgettable. And somehow always blamed on the instrument instead of the person pressing the keys.

People love to say, “AI is so generic now.”

Yes. Because you asked it to be.

You didn’t set boundaries.

You didn’t choose a perspective.

You didn’t name a tension, a belief, or even a basic intention.

You handed it a foggy mirror and got mad when your reflection looked blurry.

Here’s the part no one likes hearing:

AI reflects your clarity back at you.

Not your intelligence.

Not your talent.

Your clarity.

If you don’t know what you’re trying to say, it won’t rescue you. It will politely guess. And guessing looks a lot like blandness.

This is where the “lazy AI” narrative gets convenient.

It lets you avoid asking harder questions, like:

- What am I actually trying to do here?

- Who is this for?

- What do I want this to feel like, not just sound like?

- What am I refusing to decide because deciding would commit me to something?

Blaming the tool is easier than admitting you’re still circling the idea instead of landing it.

And look, I get it.

Decisions are uncomfortable. Constraints feel limiting. Precision feels risky.

But creativity without decisions isn’t freedom. It’s drift.

“Surprise me” is not bold.

It’s abdication.

The irony is that the moment people stop treating AI like a slot machine and start treating it like a collaborator, the complaints mysteriously disappear.

When you give it:

- a role

- a point of view

- a clear emotional register

- something it is not allowed to do

Suddenly it’s “impressive” again.

Funny how that works.
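
To make that list concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Brief` class, the `build_prompt` function, and the example wording are hypothetical, not part of any real library. The output is just a string you could paste into whatever AI tool you use.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """Hypothetical container for the decisions in the list above."""
    task: str           # the actual job, not a mood
    role: str           # who the model should write as
    point_of_view: str  # the stance the piece should take
    register: str       # the emotional register to aim for
    forbidden: str      # something it is not allowed to do


def build_prompt(brief: Brief) -> str:
    """Join the decisions into one plain-text prompt, one decision per line."""
    lines = [
        f"Role: {brief.role}",
        f"Point of view: {brief.point_of_view}",
        f"Emotional register: {brief.register}",
        f"Do not: {brief.forbidden}",
        f"Task: {brief.task}",
    ]
    return "\n".join(lines)


# The mood version, for comparison.
vague = "Write something inspiring."

# The job version. The wording is made up; swap in your own decisions.
clear = build_prompt(Brief(
    task="Write a 150-word note to a team that just missed a launch date.",
    role="A manager who has missed deadlines before",
    point_of_view="The slip was a planning failure, not an effort failure",
    register="Steady and warm, not cheerful",
    forbidden="Use sports metaphors or the word 'journey'",
))

print(clear)
```

The code itself does almost nothing, and that is the point of the sketch: you cannot fill in the fields without making the decisions first.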

This isn’t a call to become a prompt engineer or memorize frameworks or write novella-length instructions. It’s a much smaller ask.

Decide one thing before you type.

Just one.

Decide what this output is for, or against, or in service of. Decide what matters more than sounding clever. Decide what you don’t want it to do.

Clarity doesn’t kill creativity. It gives it something to push against.

So no, your AI isn’t lazy.

It’s doing exactly what you asked, even when what you asked was basically nothing.

And if that stings a little, good.

That means you’re closer to saying something real next time.

— Sven