But We’ve Already Decided What We Will Be

The most revealing thing about how people talk about AI is not the fear. Fear is easy. Fear is reflex.

What’s revealing is how quickly people start negotiating their own role out of the picture.

Every serious conversation about artificial intelligence eventually drifts toward inevitability.

“It’s coming.”

“It’s unstoppable.”

“We’ll have to adapt.”

Notice how cleanly those sentences remove responsibility.

When something is framed as inevitable, no one has to decide anything. No one has to draw a line. No one has to ask whether convenience is quietly replacing judgment. History becomes a conveyor belt, and we’re just standing on it, shrugging.

That framing is comforting. It’s also dishonest.

AI is not something that simply arrives. It’s something we choose to deploy, trust, defer to, and excuse. Over and over again. In small moments that don’t feel dramatic enough to count as decisions.

Most of the real shift isn’t happening in laboratories or boardrooms. It’s happening in everyday shortcuts. Letting systems recommend so we don’t have to reflect. Letting outputs stand in for understanding. Letting speed masquerade as clarity.

None of that is forced.

But it is rewarded.

The louder the conversation gets about existential AI risk, the quieter the conversation becomes about human agency. We debate consciousness while avoiding responsibility. We worry about future dominance while practicing present abdication.

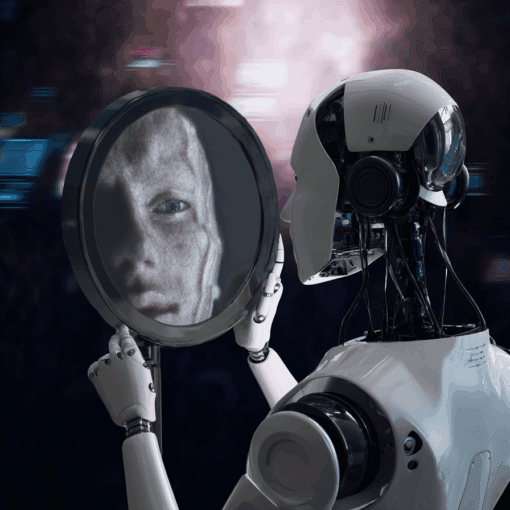

It’s easier to imagine a superintelligence taking control than to admit how often we hand it over voluntarily.

The irony is that many of the traits people fear in AI are traits we’ve already normalized in ourselves. Efficiency over care. Consistency over curiosity. Confidence without comprehension. We praise optimization, then act surprised when everything starts sounding the same.

When humans behave this way, we call it professionalism.

When machines do it, we call it alarming.

The panic isn’t about machines replacing humans. It’s about machines revealing how replaceable certain habits have already become.

This is where the conversation usually turns moral. We hear about preserving humanity, protecting creativity, defending the soul. Grand language, delivered with remarkably little interest in changing behavior.

Because defending humanity sounds noble. Practicing it is inconvenient.

Practicing it means slowing down when automation invites speed. Questioning outputs instead of polishing them. Accepting that thinking takes effort and that effort doesn’t scale neatly.

It also means acknowledging that technology didn’t steal our agency. We’ve been quietly trading it for comfort for years.

AI didn’t start that trade. It just made the exchange rate visible.

The uncomfortable truth is that the future of AI says less about machines than it does about what humans are willing to tolerate in themselves. We keep asking what AI will become, as if it were the active party in this relationship.

But the trajectory is already being written, one small decision at a time.

Not by algorithms.

By people who prefer inevitability to accountability.

And that choice, unlike the technology, is still entirely ours.